Customer discovery is the process of speaking to people to understand their problems so that you can uncover opportunities to improve your product.

The term traces its roots back to Steve Blank. He wrote a book called ‘The Four Steps to the Epiphany’ that explains how to apply the scientific method to building startups.

Customer discovery was the first of the four steps. The idea was to stop thinking about your product and “get out of the building” so that you can speak to people about their problems instead. The goal is to avoid spending loads of time building things nobody wants.

Traditionally, product work has been confused with pumping out new features. New features are fantastic but only if they’re useful.

Useful is a relative term.

It relies on a shared understanding of who you’re building the features for and what they’re trying to do with them. How’re you supposed to build something useful when you don’t know what the problems are?

Good discovery work answers these kinds of questions and helps teams understand what better means to the people who use their product.

When a team doesn’t share a clear understanding of the problems, everyone can feel stuck. You begin wasting time building things no one uses. There’s no conviction on what to build next, and you’re constantly looking to competitors for ideas to steal.

Once people start to understand what the problems are, building useful stuff is easy. You stop worrying about whether you’re releasing features, and you’re more interested in whether or not they’re solving problems.

Customer discovery questions

The only scripted question I ask is:

Tell me about why you started using [our product]?

I do customer discovery work in the context of existing products and I only interview existing users.

Traditionally, discovery work is done before you build a product. There’s lots of great writing out there about that sort of discovery work. I’m going to talk about discovery in the context of improving retention and making an existing product better.

The goal of an interview is to understand the problems people are trying to solve with your product, so that you uncover opportunities to make it better at solving those problems.

The point of this opening question is to elicit a story. You want people to paint the picture of where they were before they started using your product and where they were hoping your product would get them.

If you get a one-sentence response (“I downloaded your app to get fit”) then you can follow the question with:

What’s the hardest part about [X]?

X being the core problem your product helps people solve.

Most of the time you can recycle a one-sentence response into the follow-up: “What’s the hardest part about getting fit?”

I’m not big on using scripts. They can be helpful. I usually have a list of fallback questions if the conversation stalls. People will only tell you a story if you demonstrate that you are genuinely interested in listening to what they have to say. Running through a list of questions doesn’t invite someone to share a story with you.

If you’re just after bullet-point answers to a list of questions then you should send out a survey instead. Much less hassle on both ends.

Rather than relying on a script you’re listening for emotional highs and lows in the conversation. When you see someone is talking about something they care about, redirect the conversation with prompts like:

- Tell me more about that.

- Please say more.

- And what else?

- What happened after that?

I know I said that I am not big on scripts but there is one question that I like to end every conversation with:

Is there anything else I should have asked?

This is a great question because sometimes people understand what you are trying to do but you haven’t given them an opening to say what they want to say. Other times you can sense that the conversation isn’t over yet and it’s because they have questions. Worst case scenario, they just say no.

How to do a customer discovery interview

I can go long stretches without doing interviews and when I jump back into them I’m always a little rusty. Now I have a little game that I play that helps me hit the ground running. I score each conversation with a simple point system.

2 negative points and 2 plus points. Easy to remember while I’m talking. Then I score the recording afterward to see if I’m improving between interviews.

-1 You pitch, you lose.

If you try and push a feature or start talking about your product then the conversation becomes a sales call and stops being an interview.

An interview is about the customer, not your product.

The moment you start pitching a feature, people will gravitate towards telling you what you want to hear. If you have nothing to sell, people don’t know what you want to hear, so they can’t lie to you.

-1 Don’t Interrupt People

You can’t interrupt people. Ever.

This is where I always lose the most points.

When someone stops talking, the best thing to do is count to five in your head. I’ve never made it past 3. The idea is to create a mildly uncomfortable vacuum that elicits valuable follow-up information.

Conversely, when people are talking and you have an important question, make a note of it so that you remember to come back to it later.

+1 Talk Specifics

Hypotheticals are toxic shiny objects. They sound great and mean nothing. People are terrible at predicting their own behaviour. Instead, you can only talk about specifics that have happened in the past. It’s much harder for people to lie about specifics.

When you start talking about what someone might do, people want to tell you the *correct* answer. Regardless of how true it is. It’s not that people want to lie to you, it’s just what we do in polite conversation. It’s the path of least resistance.

Every time you shut down a vague, hypothetical statement and redirect it to something specific in the past you get a point.

+1 Summarise And Then Ask

When you can’t interrupt people, it can be hard to get a wandering conversation back on track.

One way to do this is to summarise the important bits of what people said when they stop talking. This re-aligns the conversation to what’s important to you. It also helps them reflect on what they said, and clarifies any misunderstandings.

Every time you summarise what someone says before proceeding, you get a point.

That’s it.
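If you want to keep track of the scores between interviews, the tally can be sketched in a few lines of Python. This is only an illustration of the point system above: the rule keys (`pitch`, `interrupt`, `specific`, `summarise`) and the two example recordings are made up, and nothing here is a required tool.

```python
from collections import Counter

# The four rules from the point system; the short keys are hypothetical labels.
RULES = {
    "pitch": -1,      # you pitched a feature or talked about the product
    "interrupt": -1,  # you interrupted the person
    "specific": +1,   # you redirected a hypothetical to a specific past event
    "summarise": +1,  # you summarised before asking the next question
}


def score_interview(events):
    """Return (total, per-rule counts) for the rule keys noted on one recording."""
    counts = Counter(events)
    unknown = set(counts) - set(RULES)
    if unknown:
        raise ValueError(f"unknown rule(s): {sorted(unknown)}")
    total = sum(RULES[rule] * n for rule, n in counts.items())
    return total, dict(counts)


# Tally marks from two made-up recordings, oldest first.
first = ["interrupt", "interrupt", "pitch", "summarise"]
second = ["interrupt", "specific", "specific", "summarise"]

for label, events in [("first", first), ("second", second)]:
    total, counts = score_interview(events)
    print(label, total, counts)
```

Comparing the totals across recordings is enough to see whether you are improving. The per-rule counts show where the points are going.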

Finding people to speak to

You don’t need to speak to lots of people, nor do you need to speak to them all at once. Two or three people a week is more than enough to start with.

Recent customer support wins are always a good place to start. You don’t need a complicated reason to reach out to people that have just had a great experience with customer support. Explain that you want to improve the product and you’d like to better understand how they use it. Clarifying that it will be a short call always helps.

In addition to following up on past interactions, you can begin closing out successful interactions by asking if they’d be open to scheduling a quick conversation with the product team to improve the product.

The success rate on converting support calls is usually pretty high. The problem is that you don’t control who gets in touch or how often. Eventually, you will need to be able to pick who you talk to and when.

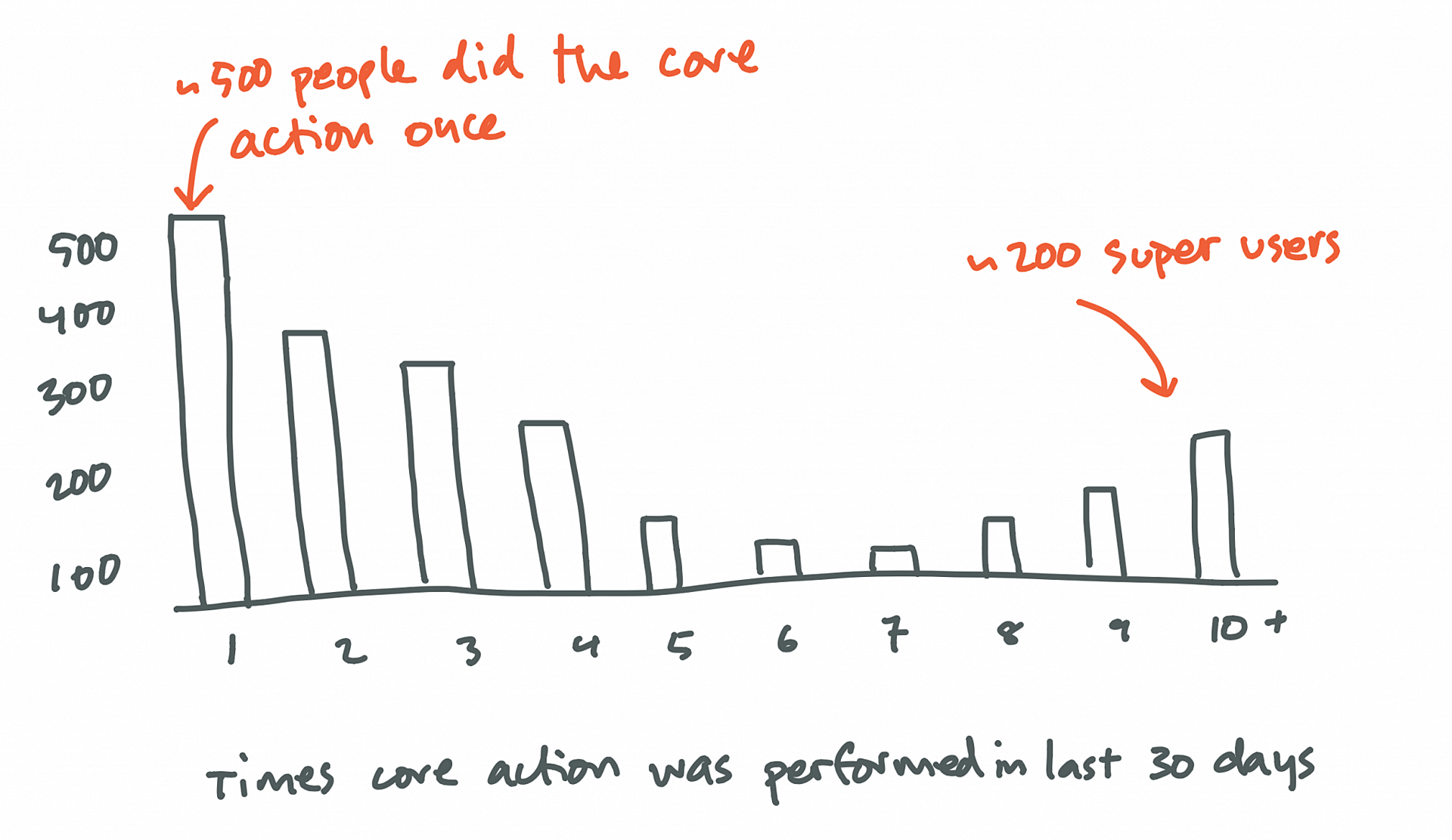

When I don’t have a specific question and I’m just listening for opportunities to improve the product then I look at last month’s activity and plot out the number of times each person performed our core action. You’ll end up with a bar chart of how many people performed how many actions.

Those who did it once or twice will be on one end, your superusers live on the other end. Filter out anyone who signed up less than a month ago and then reach out to 10 or 20 people in the top and bottom 5%.

What I’m trying to understand is the differences in the way that people on either end of this spectrum think about and use the product. Speaking to 5-6 people from each group is usually enough to get a sense of the key points of contrast in the spectrum of usage on your product.
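The selection described above can be sketched roughly like this in Python. It assumes you can export one record per user with a signup date and last month's core-action count. The field names (`id`, `signed_up`, `core_actions`), the 30-day cutoff and the choice to skip people with zero actions are assumptions for the example, not something your analytics tool will hand you in this shape.

```python
from datetime import date, timedelta


def pick_interview_candidates(users, today, tail_percent=5, min_age_days=30):
    """Return (lightest, heaviest) users by last month's core-action count.

    `users` is a list of dicts with hypothetical keys:
    "id", "signed_up" (a date) and "core_actions" (count for last month).
    """
    cutoff = today - timedelta(days=min_age_days)
    # Drop anyone who signed up less than a month ago.
    # Assumption: people with zero actions are left out of the chart entirely.
    eligible = [
        u for u in users
        if u["signed_up"] <= cutoff and u["core_actions"] > 0
    ]
    if not eligible:
        return [], []
    # Sort from fewest to most core actions.
    ranked = sorted(eligible, key=lambda u: u["core_actions"])
    # Size of each tail: 5% of eligible users, but at least one person.
    k = max(1, len(ranked) * tail_percent // 100)
    return ranked[:k], ranked[-k:]


# Made-up data: 40 established users plus one very active new signup.
today = date(2024, 6, 1)
users = [
    {"id": f"u{i}", "signed_up": today - timedelta(days=90), "core_actions": i + 1}
    for i in range(40)
]
users.append({"id": "new", "signed_up": today - timedelta(days=5), "core_actions": 500})

lightest, heaviest = pick_interview_candidates(users, today)
print([u["id"] for u in lightest], [u["id"] for u in heaviest])
```

With a small user base the two tails can overlap or hold only one person each, in which case it is simpler to pick people by eye from the chart.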

One final approach I’ve had success with is doing in-product surveys. NPS scores and those little satisfaction ratings that show up in the corner of people’s screens. You can end a quick survey like this with a request to schedule a call.

Organising the customer discovery data

When I first started doing customer interviews, it became clear that I’d be accumulating lots of notes and recordings of interviews I didn’t know what to do with.

There were two of us doing interviews at the time. We would do a bunch of interviews and then share takeaways with each other. Whoever was doing the interview was still a massive bottleneck to the actual insights.

We tried recording sessions when we got permission, but going through every recording took too long and was unsustainable. I’m going to share the process I’ve settled on since.

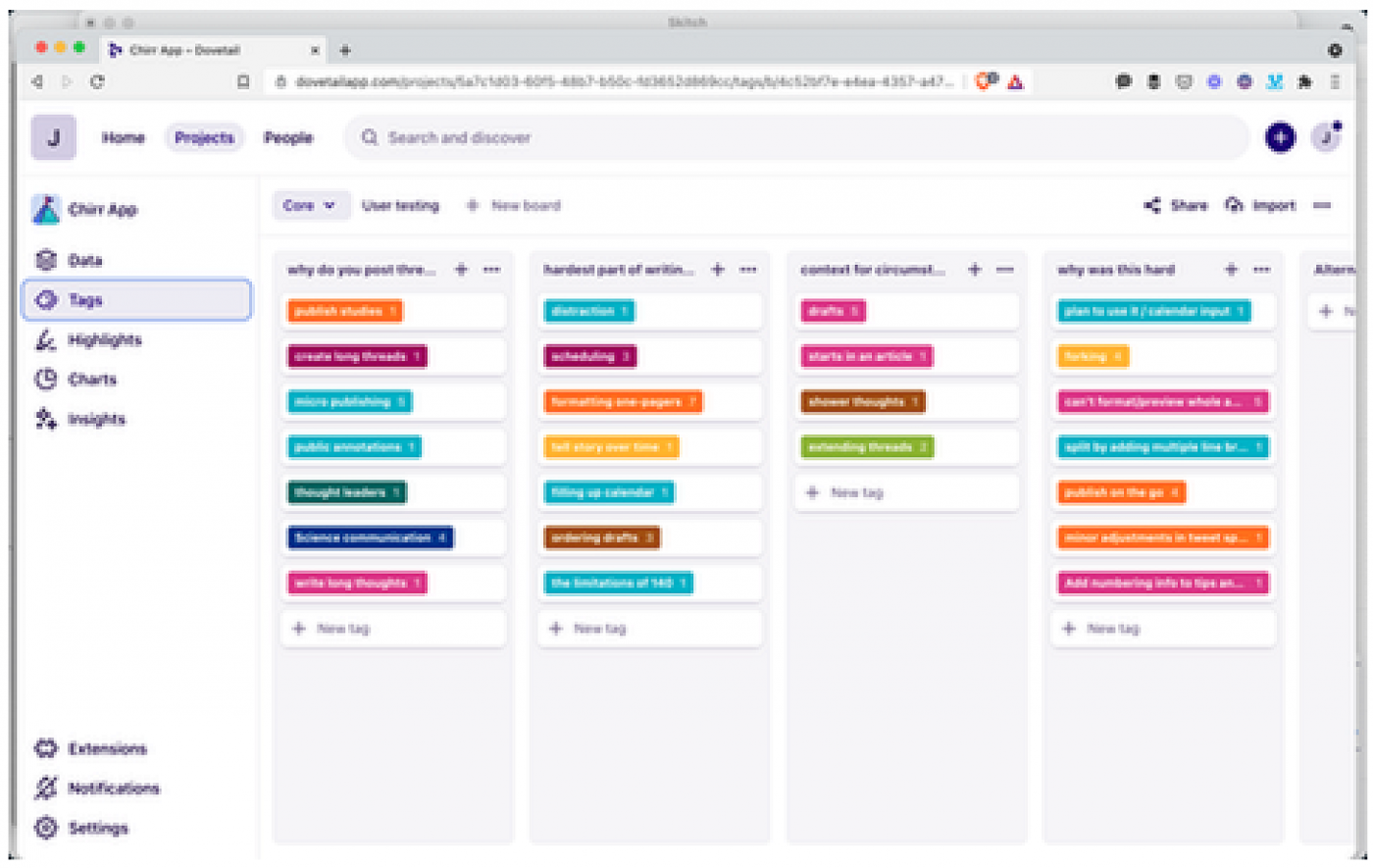

I’ve come to rely on a tool called Dovetail. I have no affiliation. I just love their product. You can probably use a free Kanban board to replicate most of this, but Dovetail makes the whole process a delight.

The raw input here is a transcript of your interview (or notes as a fallback). The idea is to go through the interview, line by line, and highlight points of note. I don’t think there is a correct way to do this, but my points of note are insights, opportunities and verbs.

An insight is anything that resonates with you (explicit or implied). How do you know what’s relevant when you’re not sure what you’re trying to figure out? Close your eyes at the end of an interview: the two or three bits that stick out most vividly are your key insights.

An opportunity is more practical. Julia wants a way to listen to the audio at double speed. Listening to what people ask for is not the same as building everything they want. Make a note of what people ask for so that you can start to see patterns in the underlying problems.

Then there’s verbs. If someone talks about the highlighter feature acting wonky, tag that under ‘highlighting’. If people bring up the text being too small, tag that under ‘reading’. When people talk about your product, capture the action at the centre of the conversation.

The goal here is to cluster all your notes around the verbs your users use to think about your product experience. I think of our product in terms of features A, B, C and D. They’re great features but people only think about the product in terms of reading, writing and highlighting. Sometimes there’s alignment here, most of the time there isn’t. The latter is all that matters.

Insights, opportunities and verbs. That’s how I organise interview data. There’s going to be a lot of overlap, but just relying on verbs doesn’t let you capture general insights and opportunities. Dovetail lets you tag the same thing in multiple ways so that’s not a problem.

To keep track of all this you can organise everything in columns. I start with 4: Insights, opportunities, verbs and one for my research question. When clusters begin to appear I pull them into their own column. So I start with 4 and then let the rest form organically.

For example, if 7 of the verb highlights are about editing then I will make a new ‘editing’ column and move everything over. Then I rename the tags to describe what it is about the editing experience they’re highlighting (slow, no redo button, autosave, placement, etc).
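As a rough illustration of that column-splitting step, here is a minimal Python sketch. It is not how Dovetail works internally. The `(verb, note)` pairs are made-up data, and the threshold of 7 is borrowed from the example above, not a rule.

```python
from collections import Counter


def promote_clusters(highlights, threshold=7):
    """Move verb highlights into their own column once a verb has enough of them.

    `highlights` is a list of (verb, note) pairs from the 'verbs' column.
    Returns a dict mapping column name -> list of notes.
    """
    counts = Counter(verb for verb, _ in highlights)
    board = {"verbs": []}
    for verb, note in highlights:
        if counts[verb] >= threshold:
            # The verb has become a cluster: it gets its own column and the
            # note becomes the tag describing what the highlight is about.
            board.setdefault(verb, []).append(note)
        else:
            # Not enough highlights yet: keep it in the general verbs column.
            board["verbs"].append(f"{verb}: {note}")
    return board


# Made-up highlights: seven about editing, two about other verbs.
highlights = [
    ("editing", "slow"),
    ("editing", "no redo button"),
    ("reading", "text too small"),
    ("editing", "autosave"),
    ("editing", "placement"),
    ("editing", "slow"),
    ("highlighting", "highlighter acts wonky"),
    ("editing", "autosave"),
    ("editing", "slow"),
]

board = promote_clusters(highlights)
for column, notes in board.items():
    print(column, notes)
```

In practice I do this by eye on the board. The sketch only shows the shape of the move: count highlights per verb, then split out the verbs that have formed a cluster.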

This is fundamentally a qualitative database. A place you can turn to when you want to know the customer’s perspective. Organisation by verbs means you know how people group the experience in their heads. Now you also know what most people care about when it comes to ‘drafting’.

The process scales to small teams well. Double entry works best in groups. A transcript gets analysed by one person and then reviewed by another before it’s ‘done’. This helps everyone stay on the same page (and minimises bias). Double entry is a luxury few teams can afford though.

I’ve also learned that exposing stakeholders to 2 hours of raw research every 6 weeks is key. If your interviews are 20-30 minutes long then shortlist 4-5 for people to watch every 6 weeks. I didn’t pull that number out of a hat, I learned it from Jared Spool. It works.

Customer discovery and doing user interviews is about grounding everyone’s decision-making process in your customer’s perspective. A minimum of 2 hours inside your users’ heads every 6 weeks makes collectively judging whether stuff will be useful much easier.

Being able to recall actual conversations when you’re making important decisions means you never have to rely on bullshit personas ever again.

How does customer discovery lead to real product improvements?

I’ve found customer research helps me improve the product in three ways: it allows me to say no to stuff, it helps me map out the problem space, and it defines useful criteria for a solution.

Saying no to stuff

It’s easy to get lost when you’re building features. Grounding yourself in your user’s perspective can let you know when you’re barking up the wrong tree.

For example, at Chirr App we let you compose Twitter threads. We were considering modifying Twitter’s tweet box with our Chrome extension so that you could write threads inside the Twitter interface. After speaking to people and listening to how they use our product, it became clear that people value creating content without the distraction of Twitter.

Modifying the native tweet box was easy to get excited about. It sounded like a wicked idea. If we had invested a chunk of time into making it happen, we would have let all our lovely users know that we’re completely disconnected from how they use our product.

Mapping out the problem space

I also use customer research to map out the problem space. It’s easy to focus on solutions, and people in product teams instinctively do too much of this. Sometimes you need to be able to put all your solutions to one side and ask what problems people care about.

When I process discovery interviews I have four columns: insights, opportunities, verbs and one for my primary research question. Verbs are the things people talk about doing in a product. If clusters or themes begin to appear then I pull them out into their own column.

For example, if 7 of the highlights in the verbs column are about ‘editing’ then I will make a new ‘editing’ column and move everything over. Then I rename the tags to describe what it is about the editing experience they’re highlighting (it’s slow, there’s no redo button, needs autosave, wonky placement, etc).

This means there’s always a place I can turn to when I want to know what customers care about. Instead of worrying about whether we’re going to build this feature or that one, you can look at the problem space and see that people think of the product in terms of searching, reading, sending and highlighting. This perspective lets you have a conversation about which one of these experiences you want to improve next. Mapping the problem space makes it easier to think of product improvements in terms of outcomes to the end user experience.

This isn’t some kind of formula. There are always business constraints and realities you have to work within. Having the problem space mapped out and being able to talk about it makes it easier to balance a business-needs-only approach with the stuff your users care about.

Useful criteria for a solution

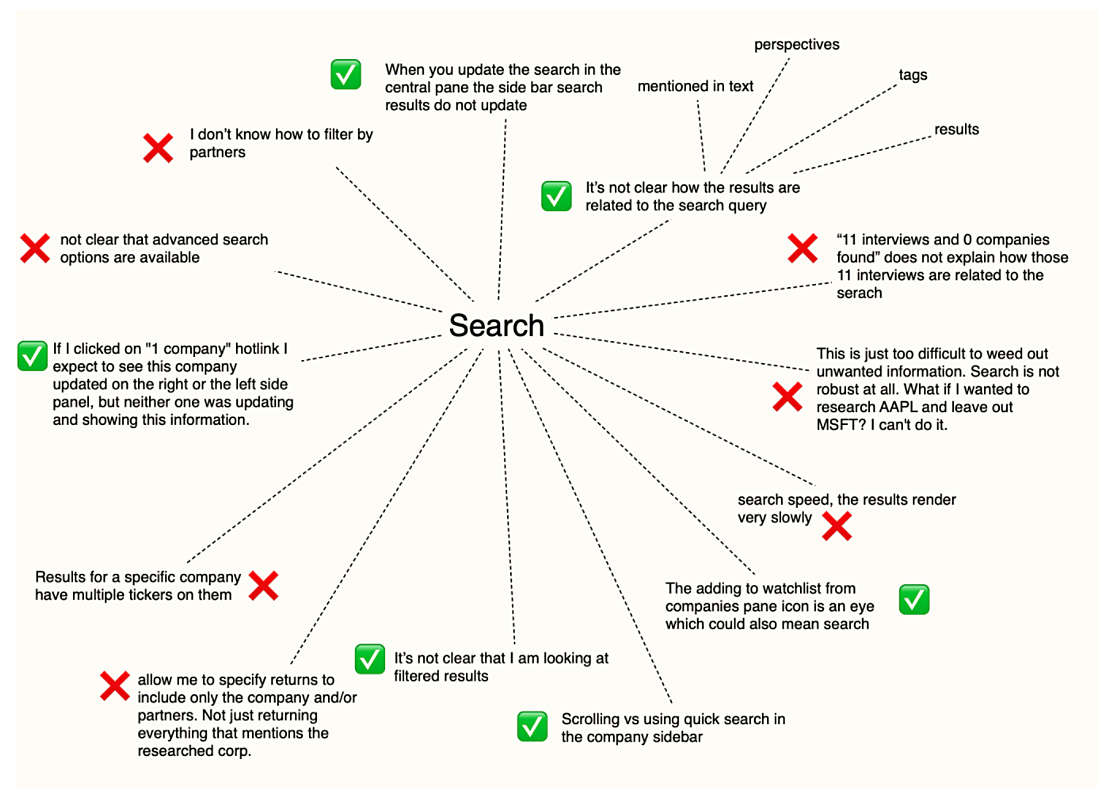

Let’s say we’ve decided that the search experience makes the most sense to focus on next. Now we have a list of all the points of friction in the current search experience. Each of the highlights in the “Search” column is a rough edge a real person brought up in conversation with you.

When you don’t understand the forces acting on a problem then you inevitably end up casting the net wide. The idea is that if you cover enough territory you’re bound to solve the problem. A wide net means building more stuff, which means more moving parts, and that means more things to maintain. You know what I’m talking about.

Understanding all the places people butt up against the current experience means I can reference them when I sit down to work on a solution. I’ll add all the feedback I can get from the customer support team, mix it with any business requirements and I end up with a diagram of all the forces that I know are influencing the design space.

Once I have all my bits the next step is to figure out which bits to focus on first. Jason Fried has a lovely essay where he talks about the obvious, the easy and the possible. I am yet to discover a better way of thinking about the tensions at play here.

You can’t make everything obvious. The more things that are, the less obvious they become. As a general rule, the more often something happens, the more obvious it should be. Stuff that just needs to be possible can be tucked away. Work on figuring out where the easy stuff goes once you’ve dealt with the obvious stuff.

A solution won’t always address all the forces. It’s good to be aware of which elements are not addressed. Sometimes they don’t need to be, other times you can pick them up in a separate sprint.

Without understanding these forces, you have no criteria by which to judge the success of a solution. Listening to people and documenting what they care about lets you reference the important stuff when you need it. Rich context like this is the antidote to a messy scope. You can stop speculating about X, Y and Z because you know exactly what to include and what you can safely omit.

That’s it, that’s everything I have to share on my customer discovery journey so far.

Customer research helps you improve products by helping you say no, mapping out the problem space so you can direct your attention towards meaningful outcomes, and by defining clear criteria for solutions.

If you’ve been speaking to people but don’t have a way to connect the research to the product roadmap, hopefully some of this will help you think about ways to organise your research so that you can rely on it to make better product decisions.

More relevant links on customer discovery stuff:

- If you’re interested in the more traditional customer discovery work that happens before you build a product then check out The Four Steps to the Epiphany by Steve Blank. The Four Steps has since been revised into a new book, co-written with Bob Dorf, called ‘The Startup Owner’s Manual’.

- The idea of applying the scientific method to building a startup was later popularised in Eric Ries’ ‘The Lean Startup’.

- This super short customer discovery tutorial by Justin Wilcox is an oldie but it’s on point 🎯.

- 💸 I cannot talk about the customer interview process without referring to Rob Fitzpatrick. His book is the single most useful resource I have ever read on the matter. The Mom Test. It’s a short book, just read it, you can thank me later.

- 💸 If you don’t have time to read Rob’s book he’s also put together a fantastic Udemy course that covers all the important bits.

- Eric Migicovsky has a great video on the basics of talking to users in YC’s startup school library.

- 💸 You can also look into learning how to start conducting jobs-to-be-done interviews.

- 💸 If you want to go deeper on interviewing in the context of existing products, one of the best courses I’ve done on customer discovery interviews is by Teresa Torres. She has a 4-week course, full of practice sessions, and it’s amazing.

- Dovetail is great for organising customer discovery research 👍

- Finally, other posts that I’ve written leverage a lot of this initial research in product messaging, website copywriting and writing design for the interface.